SecureBytes NOC Stack

The observability layer for the homelab. Every host runs Node Exporter, scraped by Prometheus on a sixty-second interval. Grafana fronts it with dashboards spanning host health, memory, disk, and network throughput across the entire cluster.

What it covers

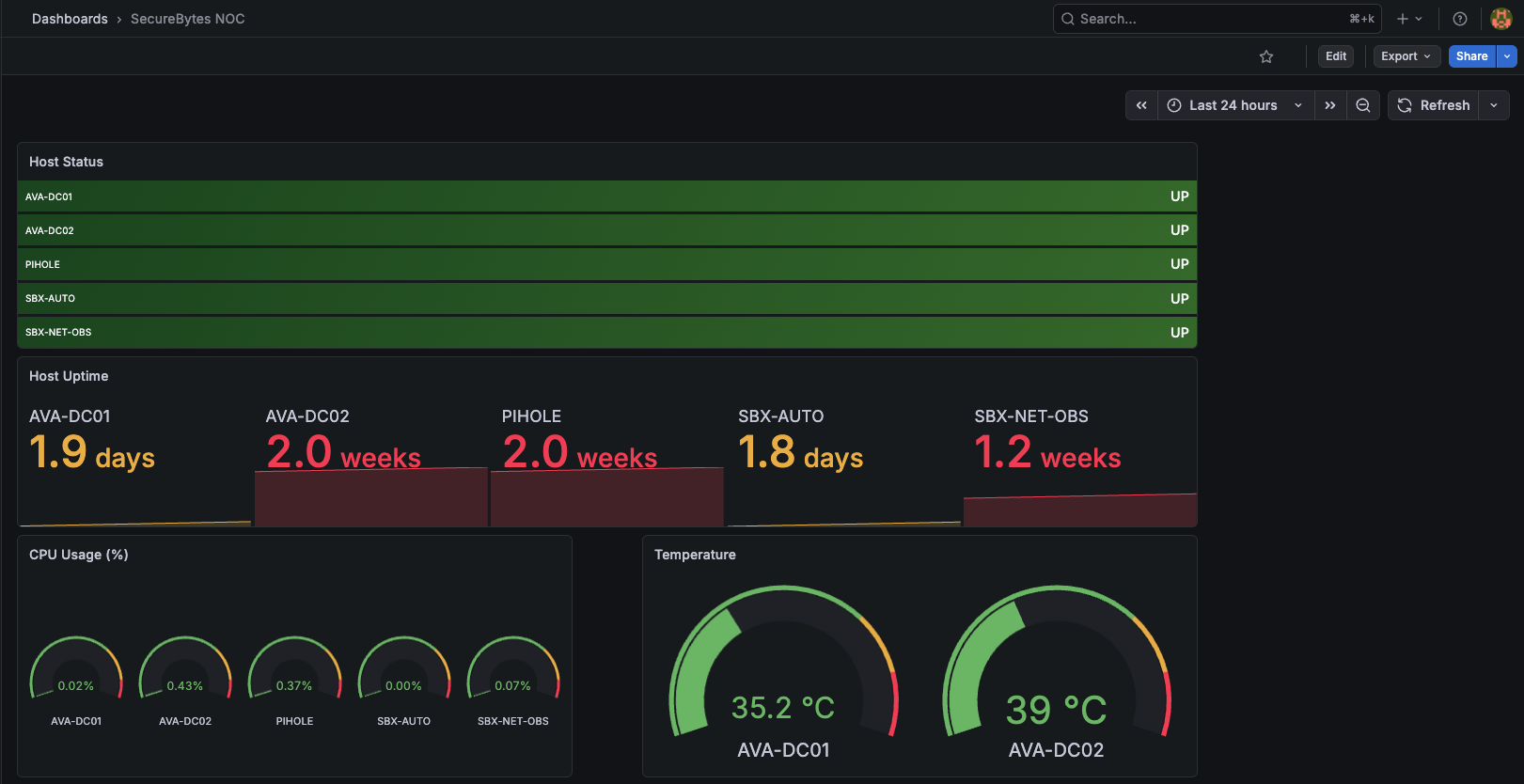

Three panel groups, one consolidated dashboard:

Host layer: uptime, CPU, memory, disk, and temperature for every node and container. Status rows turn green when a host responds to a scrape and red when it doesn’t. Uptime panels are color-graded so I can spot which hosts rebooted recently without reading the numbers.

Resource layer: memory used (absolute and as a percentage) and disk usage at the root filesystem, stacked side by side. Comparing lightweight LXCs against the heavy compute boxes is a glance, not a calculation.

Network layer: inbound and outbound throughput per host, plus separate panels for LAN traffic (internal hops between containers) and WAN traffic (egress through the firewall). Useful for catching a runaway service hammering the network before it shows up as a complaint elsewhere.
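The throughput panels boil down to turning Node Exporter's cumulative byte counters (for example `node_network_receive_bytes_total`) into a per-second rate. The dashboard's actual queries aren't reproduced here, so the following is only a simplified sketch of that idea. The function name `bytes_per_second` and the sample layout are hypothetical. PromQL's `rate()` does the real work in Grafana and also extrapolates to the window edges, which this sketch skips.

```python
from typing import Sequence, Tuple

Sample = Tuple[float, float]  # (unix timestamp in seconds, counter value in bytes)


def bytes_per_second(samples: Sequence[Sample]) -> float:
    """Average per-second increase of a cumulative counter over the window.

    A drop in the counter is treated as a reset (e.g. a host reboot): the
    counter is assumed to have restarted from zero, so the post-reset value
    counts as the increase for that step.
    """
    if len(samples) < 2:
        raise ValueError("need at least two samples to compute a rate")

    increase = 0.0
    for (_, prev), (_, curr) in zip(samples, samples[1:]):
        increase += (curr - prev) if curr >= prev else curr

    elapsed = samples[-1][0] - samples[0][0]
    if elapsed <= 0:
        raise ValueError("samples must span a positive time range")
    return increase / elapsed
```

With a 60s scrape interval, a rate window needs to cover at least two scrapes, which is one reason short-lived spikes get averaged out on these panels.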

Stack

- Prometheus scraping every host on 60s intervals

- Node Exporter on every Proxmox node, every LXC, every VM

- Grafana with custom dashboard JSON (one consolidated overview, edit-friendly variables)

- ntfy.sh push notifications wired through Alertmanager

- Docker Compose for the Prometheus + Grafana services themselves

Design decisions worth naming

Node Exporter on everything, no exceptions. Cost is ~10MB of RAM per host. The value is that nothing is invisible when something behaves weirdly — the data is already there. Curating “what’s worth monitoring” up-front is premature optimization for a homelab.

60-second scrape interval. Faster intervals (15s, 5s) sound better, but they multiply the stored sample count by 4x and 12x respectively without changing how I actually use the data. 60s is fine for everything except acute incidents, and during acute incidents I’m on the host directly with top.
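The arithmetic behind that trade-off is easy to check. This is a rough sketch that counts samples per series per day at each interval. Actual on-disk size also depends on Prometheus's compression and on how many series each host exposes, neither of which is modeled here. The function names are illustrative.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def samples_per_day(scrape_interval_s: int) -> int:
    """Number of samples one time series accumulates per day."""
    return SECONDS_PER_DAY // scrape_interval_s


def relative_cost(interval_s: int, baseline_s: int = 60) -> float:
    """Sample volume at `interval_s` relative to the baseline interval."""
    return samples_per_day(interval_s) / samples_per_day(baseline_s)


for interval in (60, 15, 5):
    print(
        f"{interval:>2}s: {samples_per_day(interval):>6} samples/series/day, "
        f"{relative_cost(interval):.0f}x the 60s baseline"
    )
```

At 60s that is 1,440 samples per series per day; 15s gives 5,760 and 5s gives 17,280.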

One consolidated dashboard, not twelve specialty ones. I tried separating by category early on. Switching context between dashboards broke the muscle memory — every glance became a navigation problem. One dashboard with everything, scroll to find what you need. Boring, but it works.

What’s next

- Loki for log aggregation so metrics and logs share one query surface in Grafana

- Alertmanager rules tuned by severity — currently every alert is the same level, which trains me to ignore the low-priority ones

- Sanitized dashboard JSON pushed to a clean public repo
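For the severity tuning item, one possible shape is a small bridge that maps a `severity` label on each alert to an ntfy priority, so low-priority alerts arrive quietly and critical ones don't. This is a sketch under assumptions, not the current setup: the label values, the mapping, and the function name `ntfy_messages` are all hypothetical. It only builds the headers and body for each message and leaves the HTTP POST to the ntfy topic out.

```python
from typing import Dict, List, Tuple

# Hypothetical mapping from a `severity` alert label to an ntfy priority (1-5).
SEVERITY_TO_PRIORITY: Dict[str, str] = {
    "critical": "5",
    "warning": "3",
    "info": "2",
}
DEFAULT_PRIORITY = "3"  # used when an alert carries no recognized severity


def ntfy_messages(webhook_payload: dict) -> List[Tuple[Dict[str, str], str]]:
    """Turn an Alertmanager webhook payload into (headers, body) pairs for ntfy."""
    messages = []
    for alert in webhook_payload.get("alerts", []):
        labels = alert.get("labels", {})
        annotations = alert.get("annotations", {})
        state = alert.get("status", "firing")
        severity = labels.get("severity", "")
        headers = {
            "Title": f"[{state}] {labels.get('alertname', 'unknown alert')}",
            "Priority": SEVERITY_TO_PRIORITY.get(severity, DEFAULT_PRIORITY),
        }
        body = annotations.get("summary") or labels.get("instance", "no details")
        messages.append((headers, body))
    return messages
```

The point of the default priority is that an unlabeled alert still gets delivered at a normal level rather than being silently dropped or escalated.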

Field notes

The original public repo for this stack was deleted during a May 2026 security audit. Dashboard JSON exports were embedding the data source URL field — which contained internal IPs — into commit history. The work itself wasn’t the problem; the publishing workflow was. Tightened the dual-repo discipline (private internal source → sanitized public mirror) and the next push will run through that gate. The screenshots above are the visible artifact in the meantime.
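The sanitizing gate could look something like the sketch below. It walks an exported dashboard and blanks anything that looks like a data source address. It rests on assumptions: that the leak sits in keys named `url`, and that any private-range IPv4 address appearing in a string should be redacted. The real export may carry addresses elsewhere, so treat `SENSITIVE_KEYS` and the placeholder as illustrative.

```python
import ipaddress
import re
from typing import Any

# Assumption: any key named "url" in the export may carry a data source address.
SENSITIVE_KEYS = {"url"}
IPV4_PATTERN = re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")
PLACEHOLDER = "REDACTED"


def _redact_private_ips(text: str) -> str:
    """Replace private-range IPv4 addresses inside a string with a placeholder."""

    def replace(match: re.Match) -> str:
        try:
            address = ipaddress.ip_address(match.group(0))
        except ValueError:
            return match.group(0)  # looks like an IP but isn't valid, e.g. 999.1.1.1
        return PLACEHOLDER if address.is_private else match.group(0)

    return IPV4_PATTERN.sub(replace, text)


def sanitize(node: Any) -> Any:
    """Recursively copy parsed dashboard JSON with sensitive values removed."""
    if isinstance(node, dict):
        return {
            key: PLACEHOLDER if key in SENSITIVE_KEYS else sanitize(value)
            for key, value in node.items()
        }
    if isinstance(node, list):
        return [sanitize(item) for item in node]
    if isinstance(node, str):
        return _redact_private_ips(node)
    return node
```

Run against `json.load(...)` of each export before it is copied to the public mirror, this covers new commits only. It does nothing about addresses already present in an existing repo's history.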
